Prologue

This is a miniseries dedicated to the memory of my first reading of Bostrom’s new book, “Deep Utopia,” which, somewhat contrary to his intentions, I found very disturbing and irritating. Bostrom, who considers himself a longtermist, intended to write a more light-hearted book after his last one, “Superintelligence,” one that would offer a positive perspective on the outcome of a society that reaches technological maturity. Existential risk management is a major theme in Bostrom’s writings; he is among the top experts in the field.

“Deep Utopia” can be considered a long-winded essay about what I would call existential bliss management: Let us imagine that everything in humanity’s ascension to universal stardom goes right and we reach the stage of technological maturity (Tech-Mat), a condition for which Bostrom coins the term “plasticity.” Basically, he just assumes that all the upsides of the posthumanist singularity, as described by proponents like Kurzweil et al., come true. Then what?

To bring light into this abyss, Bostrom dives deep down to the Mariana Trench of epistemic futurology and finds some truly bizarre intellectual creatures in this extraordinary environment he calls Plastic World.

Bostrom’s detailed exploration of universal boredom after reaching technological maturity is much more entertaining than its subject would suggest. Alas, it’s no “Superintelligence” barn burner either.

He chooses to present his findings in the form of a meta-diary, structuring his book mainly via days of the week. He seems to intend to be playful and light-hearted in his style and his approach to the subject. This is a dangerous path, and I will explain why I feel that he partly fails in this regard. This is not a book anyone will have real fun reading. Digesting the essentials of this book is not made easier by the meta-level and self-referential structure where the main plot happens in a week during Bostrom’s university lectures. The handouts presented during these lectures are a solid way to give the reader an abstract. There is plenty to criticize about the form Bostrom chose, but it’s the quality, the depth of the thought apparatus itself that demands respect.

Then there is a side story about a pig that’s a philosopher, a kind of “Animal Farm” meets “Lord of the Flies” parable that I never managed to care for or see how it is tied to the main subject. A kind of deep, nerdy insider joke only longtermist Swedish philosophers might grasp.

This whole text is around 8,500 words and was written consecutively. The splitting into multiple parts is only for the reader’s convenience. The density of Bostrom’s material is the kind you would expect exploring such depths. I am afraid this text is also not the most accessible. Only readers who have no aversions to getting serious intellectual seizures should attempt it. All the others should wait until we all have an affordable N.I.C.K. 3000 mental capacity enhancer at our disposal.

PS: A week later, now that the dust of hopelessness I felt directly after reading has settled, I can see how this book will be a classic 20 years from now. Bostrom, with the little lantern of pure reasoning, went deeper than most of his contemporaries when it comes to cataloging the strange creatures that live at the bottom of the deep sea of the solved world.

Handout 1: The Cosmic Endowment

The core information of this handout is that a technologically advanced civilization could potentially create and sustain a vast number of human-like lives across the universe through space colonization and advanced computational technologies. Utilizing probes that travel at significant fractions of the speed of light, such a civilization could access and terraform planets around many stars, further amplifying their capacity to support life by creating artificial habitats like O’Neill cylinders. Additionally, leveraging the immense computational power generated by structures like Dyson spheres, it’s possible to run simulations of human minds, leading to the theoretical existence of a staggering number of simulated lives. This exploration underscores the vast potential for future growth and the creation of life, contingent upon technological progress and the ethical considerations of simulating human consciousness. It is essentially a longtermist’s numerical fantasy. The main argument, and the reason why Bostrom writes his book, is here:

If we represent all the happiness experienced during one entire such life with a single teardrop of joy, then the happiness of these souls could fill and refill the Earth’s oceans every second, and continue doing so for a hundred billion billion millennia. It is really important that we ensure these truly are tears of joy.

Bostrom, Nick. *Deep Utopia: Life and Meaning in a Solved World* (English Edition), p. 60.

How can we make sure? We can’t, and this is a really hard problem for computationalists like Bostrom, as we will find out later.

Handout 2: CAPS AT T.E.C.H.M.A.T.

Bostrom gives an overview of a number of achievements at Technological Maturity (T.E.C.H.M.A.T.) for different sectors:

1. Transportation

2. Engineering of the Mind

3. Computation and Virtual Reality

4. Humanoid and other robots

5. Medicine & Biology

6. Artificial Intelligence

7. Total Control

The illustrations scattered throughout this series provide an impression. Bostrom later gives a taxonomy (Handout 12, Part 2 of this series), where he delves deeper into the subject. For now, let’s state that the second sector, Mind-engineering, will play a prominent role, as it is at the root of the philosophical meaning problem.

Handout 3: Value Limitations

Bostrom identifies six different domains where, even in a scenario of limitless abundance at the stage of technological maturity (Tech-Mat), resources could still be finite. These domains are:

- Positional and Conflictual Goods: Even in a hyperabundant economy, only one person can be the richest person; the same goes for any achievement, like standing on the moon or climbing a special mountain.

- Impact: A solved world will offer no opportunities for greatness.

- Purpose: A solved world will present no real difficulties.

- Novelty: In a solved world, Eureka moments, where one discovers something truly novel, will occur very sporadically.

- Saturation/Satisfaction: Essentially a variation on novelty, with a limited number of interests. Acquiring the nth item in a collection or the nth experience in a total welfare function will yield ever-diminishing satisfaction returns. Even if we take on a new hobby or endeavor every day, this will be true on the meta-level as well.

- Moral Constraints: Ethical limitations that remain relevant regardless of technological advances.
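
The saturation point in the list above can be made concrete with a toy calculation. The following is a minimal sketch, assuming a logarithmic welfare function; that choice and the names `total_satisfaction` and `marginal_satisfaction` are illustrative assumptions of mine and are not taken from Bostrom’s book.

```python
import math


def total_satisfaction(n_items: int) -> float:
    """Hypothetical welfare function: total satisfaction after n items.

    Assumption: satisfaction grows logarithmically with the number of
    items or experiences collected. Any concave function would do.
    """
    return math.log1p(n_items)


def marginal_satisfaction(n: int) -> float:
    """Extra satisfaction gained from acquiring the n-th item (n >= 1)."""
    return total_satisfaction(n) - total_satisfaction(n - 1)


# Marginal gains for the 1st, 10th, 100th and 1000th item.
gains = [marginal_satisfaction(n) for n in (1, 10, 100, 1000)]

for n, gain in zip((1, 10, 100, 1000), gains):
    print(f"item {n:>4}: marginal satisfaction {gain:.4f}")
```

Under this assumption each additional item adds less than the one before it, while the total still keeps growing. Counting newly started hobbies instead of items gives the same picture one level up.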

Handouts 4 & 5: Job Securities, Status Symbolism and Automation Limits

The last remaining tasks for which humans might be favored are jobs that confer status on the employer or buyer, or jobs where humans are simply considered more competent than robots. These include emotional work such as counseling other humans or holding a sermon in a religious context.

Handout 9: The Dangers of Universal Boredom

(…) as we look deeper into the future, any possibility that is not radical is not realistic.

Bostrom, Nick. *Deep Utopia: Life and Meaning in a Solved World* (English Edition), p. 129.

The four case studies: In a solved world, every activity we currently value as beneficial will lose its purpose. Such activities might then completely lose their recreational or didactic value as well. Bostrom’s in-depth analyses of shopping, exercising, learning, and especially parenting are devastating.

Handout 10: Downloading and Brain Editing

This is the decisive part that explains why autopotency is probably one of the hardest and last capabilities a Tech-Mat civilization will develop.

Bostrom goes into detail about how this could be achieved and which challenges must be overcome to make such a technology feasible:

Unique Brain Structures: The individual uniqueness of each human brain makes a simple “copy and paste” of knowledge unfeasible without complex translation between the unique neural connections of different individuals.

Communication as Translation: The imperfect process of human communication is a form of translation, turning idiosyncratic neural representations into language and back into neural representations in another brain.

Complexity: Directly “downloading” knowledge into a brain is hard because genuine understanding and skill acquisition would require adjusting billions or trillions of cortical synapses, and possibly subcortical circuits, with femtoprecision.

Technological Requirements: Calculating the synaptic changes needs many orders of magnitude more computing power than we may have at our disposal. These requirements are potentially AI-complete, meaning that we would need artificial superintelligence first in order to meet them.

Superintelligent Implementation: Bostrom suggests that superintelligent machines, rather than humans, may eventually develop the necessary technology, utilizing nanobots to map the brain’s connectome and perform synaptic surgery based on computations from an external superintelligent AI.

Replicating Normal Learning Processes: To truly replicate learning, adjustments would need to be made across many parts of the brain to reflect meta-learning, the formation of new associations, and changes in various brain functions, potentially involving trillions of synaptic weights.

Ethical and Computational Complications: There are potential ethical issues and computational complexities in determining how to alter neural connectivity without generating morally relevant mental entities or consciousness during simulations.

Comparison with Brain Emulations: Transferring mental content to a brain emulation (a digital brain) might be easier in some respects, such as the ability to pause the mind during editing, but the computational challenges of determining which edits to make would be similar.

Handout 11: Experience Machine

A variation on Handout 10: Instead of directly manipulating the physical brain, we have perfected simulating realities that give the brain the exact experience it perceives as reality (see Reality+, Chalmers). This might actually be a computationally less demanding task and could be a step on the way to real brain editing. Bostrom takes Nozick’s thought experiment and examines its implications.

Section a discusses the limitations of directly manipulating the brain to induce experiences that one’s natural abilities or personality might not ordinarily allow, such as bravery in a coward or mathematical brilliance in someone inept at math. It suggests that extensive, abrupt, and unnatural rewiring of the brain to achieve such experiences could alter personal identity to the point where the resulting person may no longer be considered the same individual. The ability to have certain experiences is heavily influenced by one’s existing concepts, memories, attitudes, skills, and overall personality and aptitude profile, indicating a significant challenge to the feasibility of direct brain editing for expanding personal experience.

Section b highlights the complexity of replicating experiences that require personal effort, such as climbing Mount Everest, through artificial means. While it’s possible to simulate the sensory aspects of such experiences, including visual cues and physical sensations, the inherent sense of personal struggle and the effort involved cannot be authentically reproduced without inducing real discomfort, fear, and the exertion of willpower. Consequently, the experience machine may offer a safer alternative to actual physical endeavors, protecting one from injury, but it falls short of providing the profound personal fulfillment that comes from truly overcoming challenges, suggesting that some experiences might be better sought in reality.

Section c is about social or parasocial interactions within these experience machines. Bostrom explores various methods and ethical considerations for creating realistic interaction experiences within a hypothetical experience machine. He distinguishes between non-player characters (NPCs), virtual player characters (VPCs), player characters (PCs), and other methods such as recordings, interpolations, and guided dreams to simulate interactions:

1. NPCs are constructs lacking moral status that can simulate shallow interactions without ethical implications. However, creating deep, meaningful interactions with NPCs poses a challenge, as it might necessitate simulating a complex mind with moral status.

2. VPCs possess conscious digital minds with moral status, allowing for a broader range of interaction experiences. They can be generated on demand, transitioning from NPCs to VPCs for deeper engagements, but raise moral complications due to their consciousness.

3. PCs involve interacting with real-world individuals either through simulations or direct connections to the machine. This raises ethical issues regarding consent and authenticity, as real individuals or their simulations might not act as desired without their agreement.

4. Recordings offer a way to replay interactions without generating new moral entities, limiting experiences to pre-recorded ones but avoiding some ethical dilemmas by not instantiating real persons during the replay.

5. Interpolations utilize cached computations and pattern-matching to simulate interactions without creating morally significant entities. This approach might achieve verisimilitude in interactions without ethical concerns for the generated beings.

6. Guided dreams represent a lower bound of possibility, suggesting that advanced neurotechnology could increase the realism and control over dream content. This raises questions about the moral status of dreamt individuals and the ethical implications of realistic dreaming about others without their consent.

to be continued

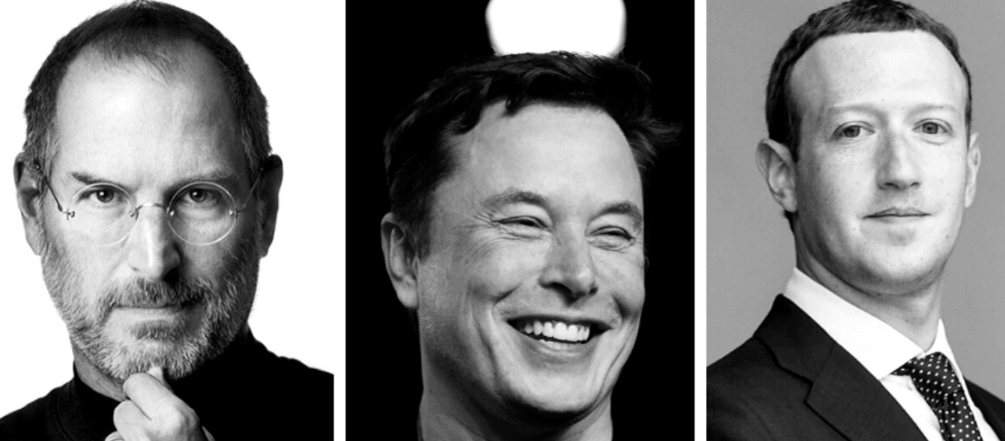



